What is a foundation model and why is transparency important?

Diving into the recently published transparency index and pondering where to from here

If you are following the field of generative AI at more than a SkyNet and killer robots level, you will likely have heard the term ‘foundation model’ being used in conversation. With the release last week of the Foundation Model Transparency Index, it felt like a good time to check my mental models for this concept and learn a little more about both past and future.

What is a foundation model?

If ‘foundation model’ is a phrase you’ve used in conversation but never examined your understanding of (kinda like multi-tenancy or customer data platform 😎), the history is interesting, and perhaps surprisingly long.

Back in August 2021, the Stanford Institute for Human-Centered Artificial Intelligence convened a new interdisciplinary Center for Research on Foundation Models.

In recent years, a new successful paradigm for building AI systems has emerged: Train one model on a huge amount of data and adapt it to many applications. We call such a model a foundation model.

Website of the HAI Center for Research on Foundation Models

As you would imagine, this name wasn’t chosen lightly. It was specifically intended to be broader than the large language models (LLMs) with which it is often associated. The Center’s collaborators considered and rejected: base model, broadbase model, inframodel, platform model, task-agnostic model, polymodel, pluripotent model, generalist model, universal model and multi-purpose model. Bravo for rejecting ‘pluripotent model’ - imagine trying to say that regularly in conversation!

Further expanding on the choice of ‘foundation model’, I found this paragraph particularly enlightening. A good one to read, reread and ponder.

We take “foundation” to designate the function of these models: a foundation is built first and it alone is fundamentally unfinished, requiring (possibly substantial) subsequent building to be useful. “Foundation” also conveys the gravity of building durable, robust, and reliable bedrock through deliberate and judicious action.

The authors also call out that they specifically and intentionally chose ‘foundation’, not ‘foundational’. While ‘foundational’ implies something solid and fundamental, perhaps principles-based, you can have shaky foundations!

So when you think foundation models, think about the foundations of a building. Very important if you intend to build a substantial and expensive dwelling on top of them, but by no means guaranteed to be robust and fit for purpose for every building project.

Also of note is the date the Center was established: August 2021. So more than two years ago, well before the current wave of generative AI headlines, foundation models (the Center launch materials reference GPT-3 and BERT among others) were already prominent enough in the global research community to warrant a center dedicated to “interdisciplinary research that lays the groundwork of how foundation models should be built to make them more efficient, robust, interpretable, multimodal, and ethically sound.” That is an interesting counterpoint to the common perception that an extraordinary amount of progress has been jammed into the last 12 months.

If you will excuse the pun, that has hopefully shored up our collective understanding of the phrase ‘foundation model’. Now on to the recently announced transparency index.

The Transparency Index

I talk to many folk these days who have an interest in the fields of AI safety and AI risk. Windows into both would be opened by something like a widely adopted transparency index. Could this be it?

While the societal impact of these models is rising, transparency is on the decline. If this trend continues, foundation models could become just as opaque as social media platforms and other previous technologies, replicating their failure modes.

The Foundation Model Transparency Index website, Oct 2023

My own experience, and that of others I speak to, is that transparency into how models are built, what they are built from, their architecture and the evaluation of their abilities and limitations has decreased markedly over the past eight years or so. Decreasing transparency, while great for protecting commercial interests, makes it harder for downstream businesses, from startup to enterprise, to confidently build applications that rely on commercial foundation models. This is particularly true for businesses with safety concerns or ethical commitments, including those in Australia, where reporting requirements for listed companies already include metrics associated with exposing and combatting modern slavery and, soon, climate change.

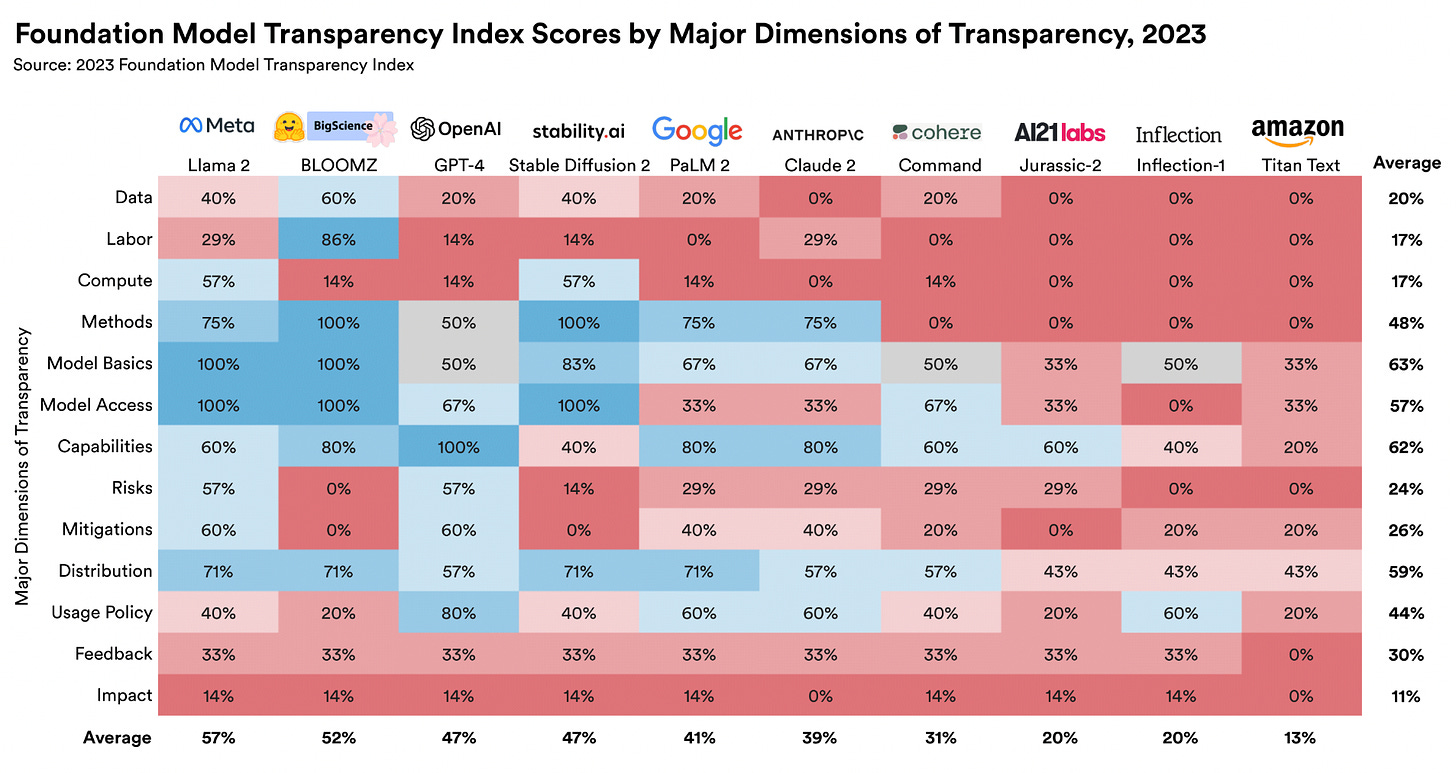

To build the index, the team developed 100 different indicators of transparency. These criteria are derived from the AI literature as well as from the social media arena, which has a more mature set of practices around consumer protection.

To rate the top model creators, the research team used a structured search protocol to collect publicly available information about each company’s leading foundation model.

After the team came up with a first draft of the FMTI ratings, they gave the companies an opportunity to respond. The team then reviewed the company rebuttals and made modifications when appropriate.

The 100 indicators are grouped into three domains and only one of those focusses on the model itself, reflecting the broad ecosystem impact of foundation models.

Upstream (32 indicators) - “the ingredients and processes involved”

Includes such things as disclosure of data sources, licensing and copyright status, disclosure of wages and labour protections for any data labelers who worked on the data curation, and environmental impacts like energy usage and carbon emissions.

Model (33 indicators) - “the properties and functions of the model”

Includes such things as the model architecture and its documentation, the rigour of capability evaluation, model risk disclosure and unintentional harm evaluation.

Downstream (35 indicators) - “the distribution & usage of the model”

Includes such things as the protocol for release, machine-generated content detection approaches, model behaviour enforcement protocol, mechanisms for redress of harm, and disclosure of permitted and prohibited use of user data.

Out of 100 indicators, 82 of them are satisfied by at least one of the 10 leading developers scored, suggesting that much greater transparency is already possible if it can be motivated as desirable.
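To make the arithmetic behind the index a little more concrete, here is a minimal sketch in Python. It assumes each indicator is scored simply as met (1) or not met (0), so a developer's total is out of 100, and it uses the 32/33/35 domain split described above. The function names and both "developers" are hypothetical and for illustration only. This is not the FMTI team's code, and the numbers are not real FMTI results.

```python
# Hypothetical sketch of FMTI-style scoring. Assumption: every indicator is
# binary (1 = met, 0 = not met), so a developer's total score is out of 100.

# Domain sizes as described for the index: 32 + 33 + 35 = 100 indicators.
DOMAIN_SIZES = {"upstream": 32, "model": 33, "downstream": 35}


def domain_ranges(sizes):
    """Map each domain name to the contiguous range of indicator indices it covers."""
    ranges, start = {}, 0
    for name, size in sizes.items():
        ranges[name] = range(start, start + size)
        start += size
    return ranges


def score(satisfied, sizes=DOMAIN_SIZES):
    """Return (per-domain scores, total score) for one developer's 0/1 list."""
    expected = sum(sizes.values())
    if len(satisfied) != expected:
        raise ValueError(f"expected {expected} indicators, got {len(satisfied)}")
    per_domain = {
        name: sum(satisfied[i] for i in idx)
        for name, idx in domain_ranges(sizes).items()
    }
    return per_domain, sum(per_domain.values())


def satisfied_by_any(developers):
    """Count indicators met by at least one developer (the '82 of 100' style figure)."""
    return sum(any(column) for column in zip(*developers))


# Two made-up developers (NOT real FMTI data).
dev_a = [1] * 40 + [0] * 60             # meets indicators 0-39
dev_b = [0] * 30 + [1] * 20 + [0] * 50  # meets indicators 30-49

print(score(dev_a))                      # ({'upstream': 32, 'model': 8, 'downstream': 0}, 40)
print(satisfied_by_any([dev_a, dev_b]))  # 50
```

Neither made-up developer scores above 40 on its own, but together they cover 50 distinct indicators. That is the same "union across developers" logic behind the observation that 82 indicators are already met by someone.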

I found this point both fascinating and really heartening. As a downstream, enterprise-scale user, and certainly as a purchaser of model outputs, I see the transparency index as providing both a framework for conversation with my vendors and a feasible way to vote with my purchasing dollars to support the dimensions of transparency that I and/or my customer base see as particularly important.

If, as seems likely, model capability converges over the medium term, having these additional dimensions on which to base a purchasing or partnership decision will increase in relevance.

And at a 10,000 ft, global policy level, serious engagement in increasing transparency gives the mega vendors a concrete way to contribute to reducing present-day harm while also making the potential future harms that have been grabbing headlines genuinely less likely.

As the index authors note, one of the significant contributions of this work is in providing a taxonomy, or if you prefer, a framework for productive conversation. Like anything that tries to simplify a complex space, the index won’t be perfect, and it will be subject to index washing and gaming. But I’m a fan of the fact that it removes, or at least greatly reduces, one of the most seductive excuses for not doing anything at all: overwhelm at seemingly intractable complexity.

Strategically, our aim is for the index to clarify discourse on foundation models and AI that is muddled and lacks grounding.

Research paper accompanying the release of the FMTI, October 2023

The results as at today are of course substantially less important than the emergence of a framework that can be used to compare and contrast, and to encourage good behaviour. Here’s to more substantive action and less hand-wringing!

And if this peek has piqued your interest, the Center’s webpage, the Transparency Index landing page and the research paper accompanying the index release are all accessibly written and well worth diving into. Also, one of the paper’s authors is a co-author of an excellent blog on Substack that you should consider adding to your weekly reads.

One more thought to take into your weekend

Emissions from global cloud computing now likely exceed those from commercial aviation. But while we’re familiar with being asked to ‘carbon offset’ our business and holiday travel, and many of us have changed work and vacation habits to reduce our flight footprint, few of us would yet pause to consider skipping that Google search or ChatGPT query.

Numbers can be fudged, and great headlines have been known to triumph over fact checking, but it’s safe to say that there’s no (carbon emissions) free lunch when it comes to the increasing compute usage powering our online experiences. So it was heartening and interesting to read about research at MIT Lincoln Laboratory and elsewhere aimed at reducing power consumption, training and running models efficiently, and making energy use more transparent.

Training is just one part of an AI model's emissions. The largest contributor to emissions over time is model inference, or the process of running the model live, like when a user chats with ChatGPT. To respond quickly, these models use redundant hardware, running all the time, waiting for a user to ask a question.

One way to improve inference efficiency is to use the most appropriate hardware. Also with Northeastern University, the team created an optimizer that matches a model with the most carbon-efficient mix of hardware, such as high-power GPUs for the computationally intense parts of inference and low-power central processing units (CPUs) for the less-demanding aspects. This work recently won the best paper award at the International ACM Symposium on High-Performance Parallel and Distributed Computing.

Using this optimizer can decrease energy use by 10-20 percent while still meeting the same "quality-of-service target" (how quickly the model can respond).

New tools are available to help reduce the energy that AI models devour, MIT News

Wishing you a peaceful and productive weekend. I’m off to mulch around my baby trees and install a shade sail ahead of what is likely to be a record-breaking summer here in Melbourne.
