Data and AI in climate change mitigation

TL;DR: we need to go hard on the data bit so we can get to the AI part before time runs out.

As long-time readers will know, one of the reasons I write this blog is that it brings some much-needed discipline to learning from what I’m reading! I read more deeply, with more feedback loops and greater attention to details, internal consistencies and patterns than when I’m reading purely for pleasure or to pass the time while waiting. What that means for you, dear reader, is that you’ll hear about topics in clusters. Explore along with me or wait a week or two and, just like the weather in Melbourne, the topic will likely change to something closer to your personal passion.

The weather in the state of Victoria over the Christmas / New Year break (appalling, and approaching biblical scale) could hardly fail to nudge me back towards climate change. For those with some knowledge of the world down under - when Brisbane weather comes to Melbourne for the holidays, you sit up and take notice!

I’ve commented before that I get mildly irritated when folk throw around phrases like ‘AI can help with climate change’, so it was with a wee bit of trepidation, and a good deal of resolve, that I sat down to deep dive into the Innovation for Cool Earth Forum’s recently released AI for Climate Change Mitigation Roadmap. However, if you share my wariness of hyperbole, you’ll like this one. It is specific, upfront about challenges and detailed enough to help someone new to the field learn a lot.

My only complaint is that certain sections (it’s a compilation of chapters by different authors, as is common in collaborative documents created from conferences / symposia) use ‘AI’ a lot where substituting the word ‘data’ would leave the meaning unchanged (more on that later). Quibbles aside, a useful read.

The first point of specificity, which definitely drew me in, is actually in the title. If you go back and read it more carefully, you’ll see that they are focussing on climate change mitigation, not, for instance, AI contributing to climate change adaptation, which I think is where a lot of the ‘handwave’-ier pundits / articles tend to focus. The roadmap, all 131 pages of it, explores the question “Can AI help cut emissions of greenhouse gases?”. Nice. Focussed. Important and very time critical!

My summary of the topics covered: How can data and/or AI be put to good use in:

Monitoring emissions (a surprisingly important topic!)

Cutting emissions in some of the largest existing categories - transport, agriculture, electricity generation and manufacturing

Materials innovation in energy storage technology

131 pages is a lot. Some points that caught my eye / tickled my fancy / gave me pause for thought are covered below. I didn’t get into the materials innovation section as I’ve written about this quite recently when I was looking at proteins and AI.

It’s all about the data

As I’ve been chatting to industry folk in the last few months, one topic that comes up again and again is that the energy sector - generation and distribution - just isn’t as digitised as the casual observer might expect. I’d be shocked if this wasn’t also true in manufacturing (although hat tip to mining for being ahead of the curve on this because of their investment in optimisation and preventative maintenance).

So this section of the report on Data Availability was quite poignant (emphasis in the box below is mine).

The term “data” loosely refers to some amount of measured information. But for AI applications, the way in which data are measured and digitized matters.

Measured and well-digitized. Properly designed and deployed instrumentation will provide high-quality data that can power AI applications. Such data typically exhibit a high degree of spatial and temporal resolution, covering relevant areas in sufficient precision over an appropriate number of experiments and amount of time. Examples include industrial production data, high-fidelity weather data and fine-resolution satellite data.

Measured but poorly digitized. Data where instrumentation is either insufficient or improperly configured may not be able to drive successful AI applications. These cases can occur in underfunded application areas (biodiversity studies), rapidly changing application areas (agriculture) and broader geographies (weather data in developing nations). For example, digitizing the monthly total energy usage at the building-level is not sufficient to drive AI-based individual household energy optimization.

Measured but not digitized. Measurements that could support AI applications may be measured but not digitized. Digital instruments without connectivity, analog instrumentation and manual observations constitute much of this category. Examples include digital thermometers without internet connectivity, analog pressure gauges and visual observations of the weather.

Not measured. Facts and quantities that would be required to drive an AI application may not be measured at all. In these situations, the ideal outcome is to leapfrog to measured and well-digitized data.

You don’t see passages like this if the majority of the needed data sits in the first bucket above. It’s sobering to reflect on the impact on us all as a species if instrumentation / telemetry of the energy sector continues to languish. Those who have spent any significant time in the data → AI game, particularly outside of Big Tech, will know the lengthy timelines involved in bringing meaningful change at scale with data and AI from a standing start.
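To make the roadmap's building-level example a bit more concrete, here is a tiny Python sketch. All the numbers are invented for illustration: two quite different patterns of household usage add up to exactly the same building total, which is why a single monthly figure per building can't tell you what any individual household should change.

```python
# Invented daily usage (kWh) for two flats in one building over a 4-day "month".
# Scenario A: both flats use a steady amount every day.
scenario_a = {"flat 1": [5, 5, 5, 5], "flat 2": [10, 10, 10, 10]}
# Scenario B: same flats, but usage is very spiky from day to day.
scenario_b = {"flat 1": [14, 2, 2, 2], "flat 2": [4, 16, 0, 20]}


def building_monthly_total(usage_by_flat):
    """Collapse everything into the single number a poorly digitised meter reports."""
    return sum(sum(daily) for daily in usage_by_flat.values())


total_a = building_monthly_total(scenario_a)
total_b = building_monthly_total(scenario_b)

print(f"Scenario A building total: {total_a} kWh")
print(f"Scenario B building total: {total_b} kWh")
print("Same total, different household behaviour:", total_a == total_b and scenario_a != scenario_b)
```

The per-household, per-day detail is exactly what gets thrown away by the aggregation, and no amount of clever modelling downstream can bring it back.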

In the chapter on Barriers, access to data and indeed just the availability of data is referenced again, reinforcing my feeling that we’ll need a big push on data collection before we will be able to get much of the AI magic on our side.

Looking for your keys under the streetlight

Most folk are now familiar with the challenge of the bias inherent in patchy training data flowing on to create model bias that can be extremely difficult to mitigate (think ChatGPT trained on Reddit threads and the stereotypical images of different nationalities generated by Midjourney). So it is interesting, and again sobering, to see the roadmap highlight the challenges of achieving holistic change based on patchy data which exists at much greater granularity for richer countries.

Economic Bias: AI solutions could be developed with economic motivations that prioritize wealthier nations or communities. As a result, mitigation strategies might overlook developing countries, which might be more vulnerable to climate change but less represented in global data sets, even when viable, cost-effective strategies are available.

Cultural Bias: Climate-related AI models might inadvertently prioritize or deprioritize certain mitigation strategies based on the cultural biases of the researchers or developers. This could overlook indigenous knowledge or local practices.

Feedback Loops: AI models that use real-time data to adapt can sometimes create feedback loops that reinforce existing biases. For example, if renewable energy installations are primarily in wealthier areas due to economic factors, an AI system optimizing for energy distribution might continue to prioritize these areas, further widening the gap.

I certainly can’t see an easy fix to this but I’m glad to see it being called out early rather than stumbled upon later! ‘Learning from the mistakes of others’ for the win!
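The feedback-loop point quoted above is easy to see in a toy simulation. This is a minimal Python sketch, not anything from the roadmap: the two areas, their starting capacity and the 'invest where capacity is already highest' rule are all assumptions I've made up to show the shape of the problem.

```python
def simulate(start_capacity, rounds, budget_per_round):
    """Toy optimiser: each round, put the whole budget where existing capacity is highest.

    Returns the final capacity per area and the gap between the best and
    worst served areas after each round.
    """
    capacity = dict(start_capacity)  # copy so the caller's data is untouched
    gaps = []
    for _ in range(rounds):
        best_area = max(capacity, key=capacity.get)
        capacity[best_area] += budget_per_round
        gaps.append(max(capacity.values()) - min(capacity.values()))
    return capacity, gaps


# Invented starting point: the wealthier area already has more installations.
start = {"wealthier area": 60.0, "poorer area": 40.0}
final, gaps = simulate(start, rounds=5, budget_per_round=10.0)

print("Final capacity:", final)
print("Gap after each round:", gaps)
```

The poorer area never receives a cent because the data never gives the optimiser a reason to look there. Real systems are far more nuanced than this, but the direction of travel is the same unless someone deliberately designs against it.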

GHG emissions monitoring

OK, some bright notes to contrast with the ‘patchy data’ doom and gloom. There is some neat stuff going on in emissions monitoring, and data capture and analysis is right at the cutting edge.

Greenhouse gases - predominantly carbon dioxide and methane - have concentrations in our atmosphere that are higher now than at any point in human history. According to the roadmap, “carbon dioxide is responsible for roughly 76% of the warming impact of GHGs globally. Methane is responsible for roughly 18%”. (The balance is from nitrous oxide and fluorinated gases.)

Good information on the sources of greenhouse gas (GHG) emissions is essential for responding to climate change. Accurate and timely data are needed to design mitigation strategies, prioritize abatement opportunities and track the effectiveness of climate policies. Historically, however, data concerning sources of GHG emissions have often been partial and approximate, with significant time lags. In many cases, a lack of definitive information on GHG emissions has been an important hurdle to climate action.

To date, GHG emissions monitoring has been hard to do and has leaned heavily on emissions factors: estimates derived from standard parameters for commonly used equipment and processes. Humans being humans, we know that what’s measured gets actioned, and that measuring things in a timely fashion is what drives sustainable behaviour change. The roadmap comments that emissions-factor-based estimation both routinely underestimates total emissions and provides no incentive for improvement.

For example, a natural gas pipeline operator will be assigned the same level of emissions—based on pipeline length and diameter—whether or not its operators engage in routine venting, flaring or other climate-adverse, high-emitting and avoidable practices.
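Here is a minimal Python sketch of why that pipeline example bites. The roadmap only says the estimate is based on pipeline length and diameter, so the formula (a flat factor per square metre of pipe wall), the factor value and the 'measured' figures below are all hypothetical, chosen purely to illustrate the point.

```python
import math
from dataclasses import dataclass

# Hypothetical emissions factor: tonnes of methane per year per square metre
# of pipe wall. An invented number, not taken from any real inventory.
FACTOR_T_PER_M2_YEAR = 0.002


@dataclass
class Pipeline:
    name: str
    length_km: float
    diameter_m: float
    measured_t_per_year: float  # invented "what a satellite might see" figure


def factor_based_estimate(pipeline: Pipeline) -> float:
    """Estimate emissions from pipe geometry alone; operating practices never enter."""
    wall_area_m2 = math.pi * pipeline.diameter_m * pipeline.length_km * 1000
    return FACTOR_T_PER_M2_YEAR * wall_area_m2


# Two identical pipelines run very differently.
careful = Pipeline("careful operator", 100, 0.5, measured_t_per_year=120.0)
leaky = Pipeline("routine venting and flaring", 100, 0.5, measured_t_per_year=900.0)

for p in (careful, leaky):
    print(f"{p.name}: estimated {factor_based_estimate(p):.0f} t/yr, "
          f"measured {p.measured_t_per_year:.0f} t/yr")
```

Both operators are assigned the same estimate, so the careful one gets no credit and the leaky one has no reason to change. Only the measured column can tell them apart.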

And for those of you thinking that carbon dioxide emissions can be tracked closely using fuel consumption and land use change data, you’re right, but this is not the case with methane.

Methane emissions, in contrast, come from a range of sources (the energy sector, food system and waste management) and are much less correlated with consumption. Energy-related methane emissions are largely avoidable byproducts of fossil fuel production and transport, uncorrelated with consumption rates and very unevenly distributed across fossil-fuel supply chains.

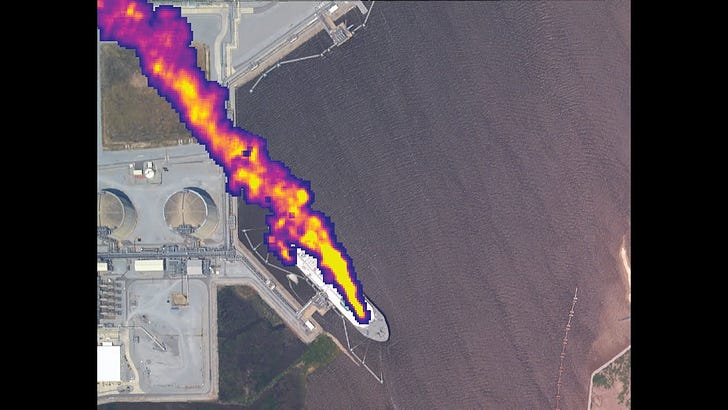

It was fascinating to read about two new satellite-based initiatives to significantly increase the resolution - spatially and temporally - of emissions monitoring.

MethaneSAT will process observed spectrographic data to calculate quantitative emission rates, revealing how much methane is emitted and how it varies across the landscape. MethaneSAT analytics are developed with much lower latency than for other satellites. Our groundbreaking analytics will track emissions based on winds and atmospheric conditions, determining location and volume from individual point sources, as well as cumulative emissions across larger areas.

MethaneSAT website, January 2024

Pinpointing, quantifying and communicating methane emissions at the scale of individual facilities is increasingly urgent, particularly on the heels of unprecedented U.S. domestic and international commitments to curb methane. We can’t manage what we don’t measure, and increased transparency of super emitters across all sources via airborne and satellite observation is key to driving focused actions to reduce emissions.

Carbon Mapper website, January 2024

Much more use of satellites, drones and ground sensors for measurement is leading to much more data. But of course, we need to turn that data into information, a challenge that has been met and overcome before in sectors ranging from financial services to telecommunications to advertising. So there’s some great opportunity for cross-industry skills transfer!

Final thoughts

My deadline caught up with me before I could fully digest and summarise key points from the section on decarbonising the power sector, but there was good stuff in there, so if this is an area of interest to you, I’d recommend taking a read. The more I discover, the more I’m seeing the application of AI to power grid structure and operations as absolutely vital.

Two takeaways

I love how holistic this roadmap is, and the fact that the lessons learned on poor and patchy data that have plagued other industries’ AI adoption are being discussed and presented right alongside the flashier stuff.

There isn’t a lot of cutting-edge AI in here (materials innovation excluded). In fact I reckon three years ago the roadmap might have had machine learning or even data science in the title. That’s not a negative per se - crack the nut with the lightest and most cost-effective hammer that you can - but it does make me wonder if the emphasis on AI could prove unhelpful if it pulls this work into the ‘trough of disillusionment’ that will be coming for (Gen) AI in ’24/’25.

On a personal note, the readership of this blog has now reached 300+ views per post with 240 subscribers! That’s way more than I ever expected back in the early months of 2023 when I decided to scratch this authorship itch.

One thing I love a lot is that subscribed readers come from 23 different countries already! A shout out to my two subscribers in Romania, one in Nigeria and one in Morocco. Of those three countries, so far I’ve only made it to Morocco (which I loved). More international travel is definitely required!

A few of you have been kind enough to tell me that you enjoy the conversational style and the balanced view presented in these posts. Two folks told me they had a backlog of Data Runs Deep stories teed up to read over the New Year and I’ve been picturing mojitos on the deck and spiced tea by the fire ever since. Thank you very much for taking the time to let me know what you enjoy - it means a lot and puts some sparkles in my day.

This weekend I’ll be measuring up the area (rather heavily sloping unfortunately) where I want to establish an orchard in the winter. I hope each of you also finds at least a little time for your own passion project. Till next time.